- Blog

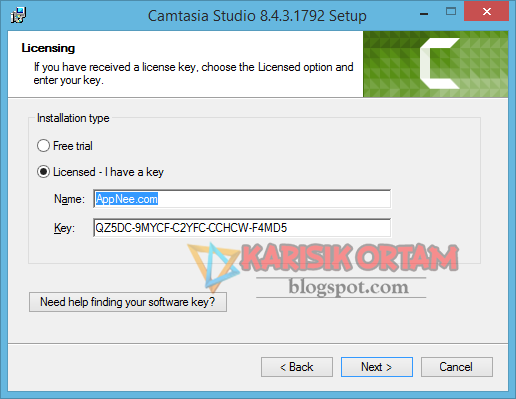

- Serial number camtasia studio 8

- Chem draw 10

- Akon right now instrumental

- Conjoined twins abby and brittany hensel

- Perricone no blush blush

- Animal crossing amiibo cards hello kitty

- Warning lights new holland tc33d tractor

- D2multires 1-13 download

- Alice coltrane harp improvisation

- Stylish tamil fonts for photoshop cs6 free download

- Adobe premiere pro cc 2017 free

- Band on the run rar

- Sonchiriya movie wiki

- Tascam 424 mkii 4 track review

- Geeks officially missing you guitar tab

- Smartpls 3 tutorial

- Lightscape band

MetaICL: Meta-training for In-Context Learning. The paper reports large-scale, diverse experiments on 142 NLP datasets (including classification, question answering, natural language inference, and paraphrase detection) across 7 different meta-training/target splits. MetaICL outperforms a range of baselines, including in-context learning without meta-training and multi-task learning followed by zero-shot transfer. The authors find that the gains are particularly significant for target tasks that have a domain shift from the meta-training tasks, and that using a diverse set of meta-training tasks is key to the improvement. The paper also shows that MetaICL approaches (and sometimes beats) the performance of models fully fine-tuned on the target task training data, and outperforms much larger models with nearly 8x as many parameters.

The method is related to recent work that uses multi-task learning to obtain better zero-shot performance at test time. MetaICL differs in that it allows learning new tasks from only k examples, without relying on task reformatting (for example, reducing everything to question answering) or task-specific templates (for example, converting different tasks into a language modeling problem).

MetaICL is evaluated on strictly new, unseen tasks. Each meta-training example matches the test setup: it includes k + 1 training examples from a single task, which are presented to the language model together as a single sequence, and the output of the last example is used to compute the cross-entropy training loss. Simply fine-tuning the model on data in this format directly leads to better in-context learning: the model learns to recover the semantics of the task from the given examples, just as it must do for a new task at test time.
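The meta-training example format described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: whitespace tokenization, the function names `build_meta_training_example` and `masked_cross_entropy`, and the list of per-token probabilities standing in for a real language model are all assumptions made for the example.

```python
import math
from typing import List, Tuple

Example = Tuple[str, str]  # (input text, output text)


def build_meta_training_example(examples: List[Example]) -> Tuple[List[str], List[int]]:
    """Concatenate k + 1 examples from one task into a single sequence.

    Returns the tokens plus a 0/1 loss mask that is 1 only on the tokens
    of the final example's output. Whitespace tokenization is an assumption.
    """
    if not examples:
        raise ValueError("need at least one example")
    tokens: List[str] = []
    mask: List[int] = []
    last = len(examples) - 1
    for i, (x, y) in enumerate(examples):
        x_tokens, y_tokens = x.split(), y.split()
        tokens.extend(x_tokens)
        mask.extend([0] * len(x_tokens))  # inputs never contribute to the loss
        tokens.extend(y_tokens)
        mask.extend([1 if i == last else 0] * len(y_tokens))  # only the last output does
    return tokens, mask


def masked_cross_entropy(token_probs: List[float], mask: List[int]) -> float:
    """Average negative log-likelihood over the positions where mask == 1.

    token_probs[i] is the probability a (hypothetical) model assigned to
    the i-th token of the sequence.
    """
    losses = [-math.log(p) for p, m in zip(token_probs, mask) if m == 1]
    if not losses:
        raise ValueError("mask selects no positions")
    return sum(losses) / len(losses)


# Three sentiment examples: k = 2 demonstrations plus one final example.
demo = [("great movie", "positive"), ("dull plot", "negative"), ("loved it", "positive")]
demo_tokens, demo_mask = build_meta_training_example(demo)
print(demo_tokens)
print(demo_mask)
```

Only the final output token carries loss here, so the first k examples act purely as context, which mirrors how the model is used at test time.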

The paper, recently published by the University of Washington together with Facebook/Meta, is titled MetaICL: Learning to Learn In Context.

Brown et al. showed that large language models (LMs) are capable of in-context learning: they learn a new task simply by conditioning on a few training examples and predicting which tokens best complete a test input. This kind of learning is attractive because the model learns a new task through inference alone, with no parameter updates. However, its performance lags significantly behind supervised fine-tuning, results often have high variance, and it can be difficult to design the templates needed to convert existing tasks to this format. The authors address these problems by introducing MetaICL (Meta-training for In-Context Learning), which tunes a pretrained language model on a large set of tasks so that it learns how to learn in context.
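In-context prediction as described here (condition on a few examples, then pick the completion the model prefers) can be sketched as follows. This is a rough illustration under stated assumptions: `build_prompt`, `predict_in_context`, the newline separator, and `toy_score` are hypothetical names and choices, and `toy_score` is a trivial stand-in for a language model's score, not a real model.

```python
from typing import Callable, List, Sequence, Tuple

Example = Tuple[str, str]  # (input text, output text)


def build_prompt(demos: Sequence[Example], test_input: str) -> str:
    """Join the demonstrations and the test input into one prompt string.

    The newline separator is an assumption made for this sketch.
    """
    parts: List[str] = [f"{x} {y}" for x, y in demos]
    parts.append(test_input)
    return "\n".join(parts)


def predict_in_context(
    demos: Sequence[Example],
    test_input: str,
    candidates: Sequence[str],
    score_fn: Callable[[str, str], float],
) -> str:
    """Return the candidate that score_fn rates highest given the prompt.

    No parameters are updated: the demonstrations only appear in the prompt.
    """
    if not candidates:
        raise ValueError("need at least one candidate")
    prompt = build_prompt(demos, test_input)
    return max(candidates, key=lambda c: score_fn(prompt, c))


def toy_score(prompt: str, candidate: str) -> float:
    """Hypothetical stand-in for a language model score.

    It only counts how often the candidate appears in the prompt; a real
    system would use the model's log-probability of the candidate.
    """
    return float(prompt.split().count(candidate))


demos = [("great movie", "positive"), ("dull plot", "negative"), ("loved it", "positive")]
print(predict_in_context(demos, "fine acting", ["positive", "negative"], toy_score))
```

Passing the scorer in as a function keeps the sketch independent of any particular model library; swapping in a real language model would only change `score_fn`.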
